Abstract

With recent advancements in large-scale pre-trained text-to-image (T2I) models, training-free image editing methods have demonstrated remarkable success. Typically, these methods add noise to a clean image via an inversion process, then run separate denoising steps for the reconstruction and editing paths during the forward process. However, because the reconstruction path is approximated using noisy latents from mismatched timesteps, existing methods inevitably suffer from accumulated drift, which fundamentally limits reconstruction fidelity. To address this challenge, we systematically analyze the inversion process within the flow transformer and propose DirectEdit, a simple yet effective editing method that eliminates the inherent reconstruction error without introducing additional neural function evaluations (NFEs). Unlike most prior work, which attempts to rectify the inversion path, DirectEdit directly aligns the forward paths, enabling precise reconstruction and reliable feature sharing. Furthermore, we introduce a preservation mechanism based on attention feature injection and multi-branch mask-guided noise blending, which effectively balances fidelity and editability. Extensive experiments across diverse scenarios demonstrate that DirectEdit edits images efficiently and accurately, outperforming state-of-the-art methods.
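The drift described above can be reproduced in a toy setting. The sketch below is not the authors' implementation: the `velocity` function is a hypothetical stand-in for the flow transformer, the convention that t = 0 is the clean latent and t = 1 is noise is an assumption, and reusing velocities cached during inversion is only one simple way to illustrate what aligning the forward path with the inversion path, at no extra NFE cost, might look like.

```python
import math

def velocity(x, t):
    # Hypothetical nonlinear velocity field standing in for the flow transformer.
    return [math.sin(xi) + t * xi for xi in x]

def invert(x0, ts):
    """Euler inversion from clean (t=0) to noisy (t=1); caches each step's velocity."""
    x, cache = list(x0), []
    for t_cur, t_next in zip(ts[:-1], ts[1:]):
        v = velocity(x, t_cur)  # evaluated at the *earlier* latent and timestep
        cache.append(v)
        x = [xi + (t_next - t_cur) * vi for xi, vi in zip(x, v)]
    return x, cache

def reconstruct_naive(x_T, ts):
    """Standard Euler denoising: re-evaluates the velocity at the *later* latent."""
    x = list(x_T)
    for t_cur, t_next in zip(ts[:0:-1], ts[-2::-1]):
        v = velocity(x, t_cur)  # mismatched with the velocity used during inversion
        x = [xi + (t_next - t_cur) * vi for xi, vi in zip(x, v)]
    return x

def reconstruct_aligned(x_T, ts, cache):
    """Assumed alignment: undo each inversion step with its cached velocity (no new NFEs)."""
    x = list(x_T)
    for i in range(len(ts) - 2, -1, -1):
        dt = ts[i + 1] - ts[i]
        x = [xi - dt * vi for xi, vi in zip(x, cache[i])]
    return x

def max_abs_err(a, b):
    return max(abs(ai - bi) for ai, bi in zip(a, b))

steps = 10
ts = [i / steps for i in range(steps + 1)]
x0 = [0.5, -1.0, 2.0]  # toy "clean latent"

x_T, cache = invert(x0, ts)
naive_err = max_abs_err(reconstruct_naive(x_T, ts), x0)
aligned_err = max_abs_err(reconstruct_aligned(x_T, ts, cache), x0)

print(f"naive reconstruction error:   {naive_err:.3e}")
print(f"aligned reconstruction error: {aligned_err:.3e}")
```

In this toy, the naive path accumulates a visible error because each denoising step queries the velocity at a different latent and timestep than the matching inversion step did, while the aligned path recovers the input up to floating-point round-off.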

Method Overview

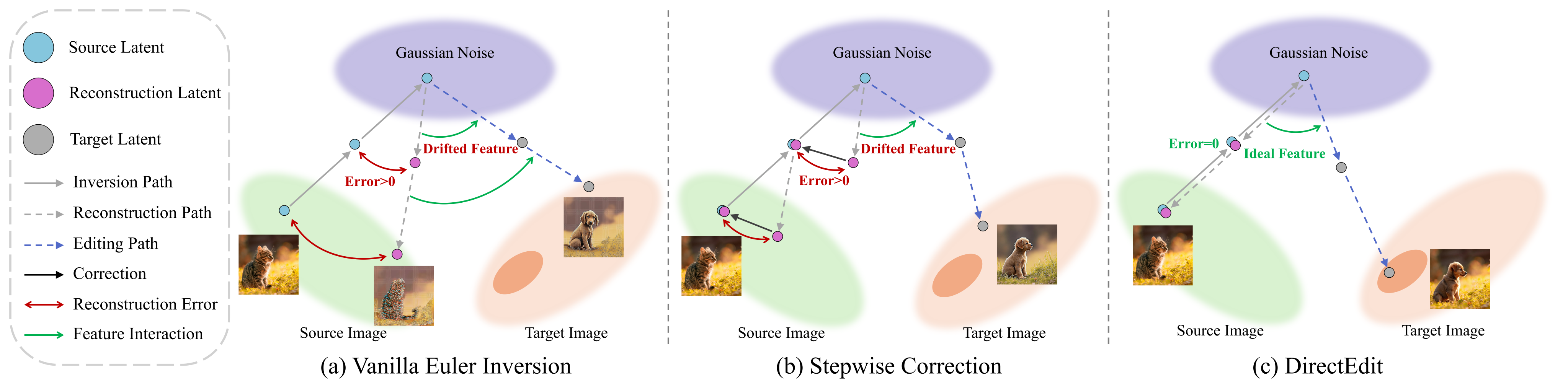

Comparison of Inversion Methods. DirectEdit eliminates the inherent reconstruction error of inversion, achieving reliable feature sharing.

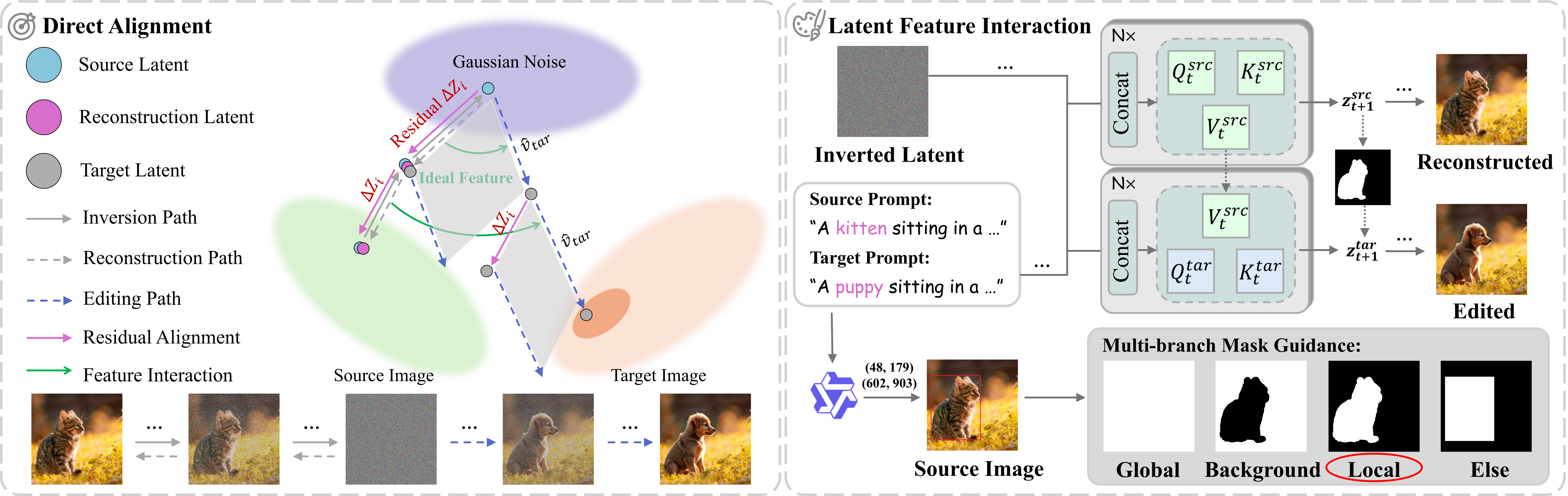

Overview of DirectEdit.

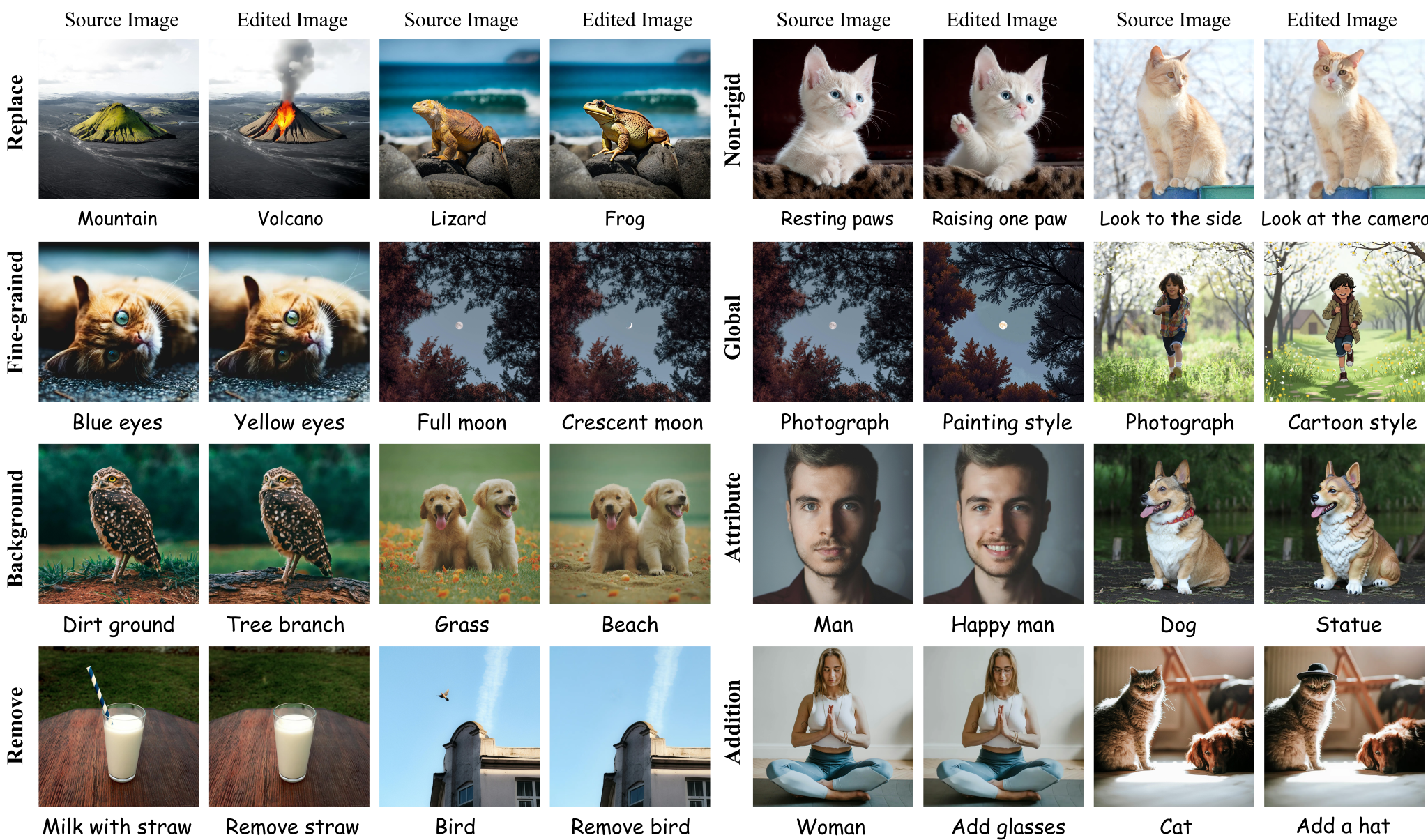

Results of Real Image Editing

Editing results of DirectEdit on high-resolution real-world images.

BibTeX

@article{yang2026directedit,
  title={DirectEdit: Step-Level Accurate Inversion for Flow-Based Image Editing},
  author={Yang, Desong and Ye, Mang},
  journal={arXiv preprint arXiv:2605.02417},
  year={2026}
}